Code implementation

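As the figure shows, the SIC momentum strategy earns a lower total return than the TNIC momentum strategy, and it also underperforms the TNIC momentum strategy during the financial crisis. One interpretation is that SIC peers are very highly correlated: a shock to one firm reaches its peers within a very short time, so the trend is hard to capture. In addition, during the financial crisis, highly correlated peers mostly reflect industry-wide trends, so they move together when hit by a systematic shock. This is consistent with the paper's findings.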

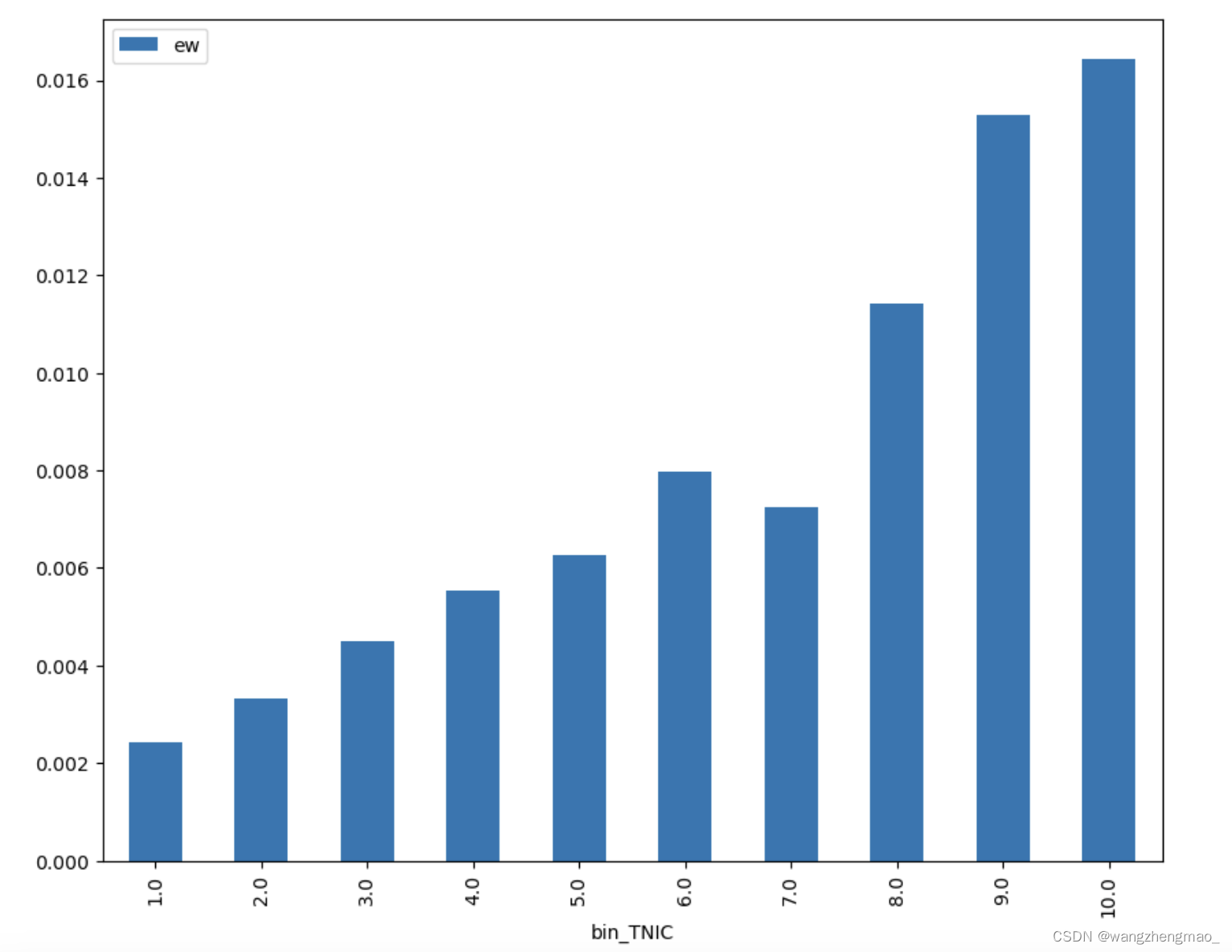
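The comparison above rests on cumulative returns. A minimal sketch for attaching a number to it is shown below. It takes a series of monthly long-short returns, such as portfolios2_TNIC["strategy_TNIC_ew"], and reports the mean monthly spread with a plain iid t-statistic. `mean_and_tstat` is a hypothetical helper, and the simple standard error here is an assumption. It is not necessarily the (possibly autocorrelation-adjusted) error the paper reports.

```python
import numpy as np


def mean_and_tstat(monthly_spread):
    """Mean monthly long-short return and its simple iid t-statistic (hypothetical helper)."""
    r = np.asarray(monthly_spread, dtype=float)
    r = r[~np.isnan(r)]                     # ignore months without a spread
    mean = r.mean()
    se = r.std(ddof=1) / np.sqrt(len(r))    # standard error of the mean, no autocorrelation adjustment
    return mean, mean / se


# In the notebook this would be applied to, e.g., portfolios2_TNIC["strategy_TNIC_ew"]
mean, t = mean_and_tstat([0.01, 0.03, 0.02])
```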

# average equal-weighted monthly return of each TNIC decile
dta = portfolios_TNIC.groupby("bin_TNIC").agg(ew=("portfolio_TNIC_ew", "mean")).sort_index()
plt.rcParams["figure.figsize"] = (10, 8)
dta.plot(kind="bar")

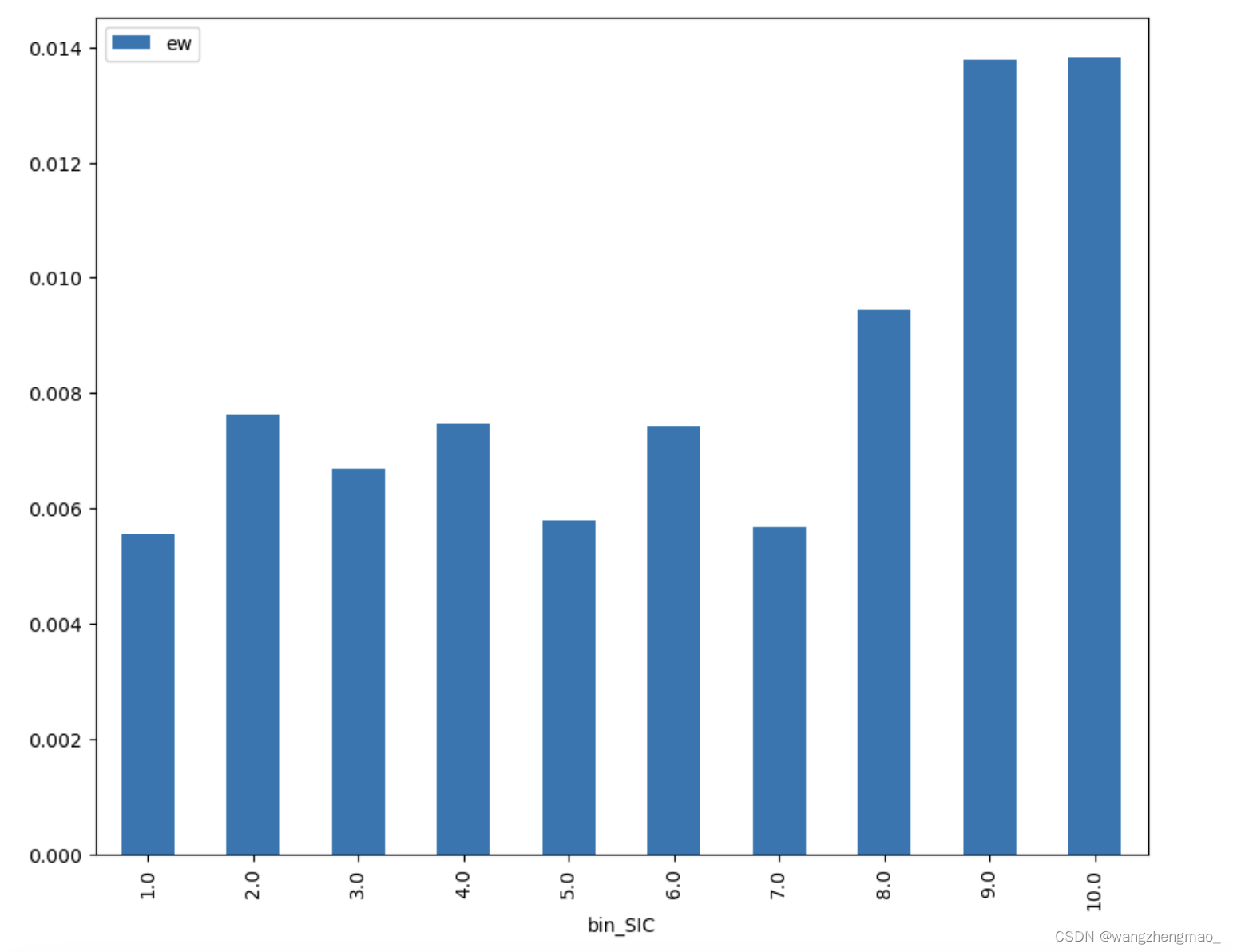

# average equal-weighted monthly return of each SIC decile
dta = portfolios_SIC.groupby("bin_SIC").agg(ew=("portfolio_SIC_ew", "mean")).sort_index()
plt.rcParams["figure.figsize"] = (10, 8)
dta.plot(kind="bar")

Building the portfolios

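The two professors identify each firm's peers with text-based analysis. Roughly speaking, they compare firms' public filings and assign a similarity score, which defines each firm's peers. Under this approach, a firm's peer set can include companies with fairly weak economic links, because similar wording in the filings alone is enough to register as similarity; two "peer" firms may even operate in different regions and make different products. Our intuition is that when two firms are only loosely similar, a price change in one takes longer to be reflected in the other. A TNIC momentum built by similarity-weighting the peers' momentum should therefore capture the trend more easily and produce higher, longer-lasting returns.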
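Written as a formula, the weighting used in `find_momentum` is:

TNIC momentum of firm i in month t = sum over peers j of s_ij * mom_j,t / sum over peers j of s_ij

Here s_ij is the similarity score of the pair in that year, and mom_j,t is peer j's momentum. A minimal sketch for a single firm and month follows. `tnic_momentum` is a hypothetical helper, and the inputs are made-up numbers.

```python
import numpy as np


def tnic_momentum(similarity_scores, peer_momentum):
    """Similarity-weighted average of peer momentum for one firm in one month (hypothetical helper)."""
    s = np.asarray(similarity_scores, dtype=float)
    m = np.asarray(peer_momentum, dtype=float)
    weights = s / s.sum()            # normalise the scores so the weights sum to 1
    return float(np.sum(weights * m))


# The more similar peer (score 0.6) gets three times the weight of the other (score 0.2)
print(round(tnic_momentum([0.2, 0.6], [0.10, -0.05]), 6))  # -0.0125
```

With equal scores the formula reduces to a simple average of the peers' momentum, which is essentially how SIC momentum is built later in the notebook.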

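In data_TNIC, permno identifies a firm and permno2 identifies one of its peers. Note that the same firm can have different peers in different years, and the similarity scores can change as well. This needs particular care when processing the data later.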
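`find_momentum` handles the year dependence by filtering both tables to a single year before merging. The toy example below shows what goes wrong if the year is ignored. The permnos, scores and momentum values are made up for illustration.

```python
import pandas as pd

# Hypothetical toy data: one peer link whose similarity score changes between years
links = pd.DataFrame({
    "permno": [10001, 10001],
    "permno2": [20002, 20002],
    "year": [1997, 1998],
    "similarity_score": [0.30, 0.10],
})
momentum = pd.DataFrame({
    "permno2": [20002, 20002],
    "year": [1997, 1998],
    "month": ["01", "01"],
    "momentum": [0.05, -0.02],
})

# Ignoring the year pairs every link with every year's momentum (2 x 2 = 4 rows)
naive = links.merge(momentum, on="permno2")
# Merging on the year as well keeps each link with the momentum of its own year
by_year = links.merge(momentum, on=["permno2", "year"])
print(len(naive), len(by_year))  # 4 2
```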

data_1["mcap"] = np.abs(data_1["prc"] * data_1["shrout"])  # CRSP reports bid/ask midpoints as negative prices
data_1["logmcap"] = np.log(data_1["mcap"]).replace(-np.inf, 0)
data_1["mcap_lag1"] = data_1.groupby("permno")["mcap"].shift(1)
data_1["ret_lag2"] = data_1.groupby("permno")["ret"].shift(2)
data_1["prc_lag1"] = data_1.groupby("permno")["prc"].shift(1).abs()
data_1["prc_lag13"] = data_1.groupby("permno")["prc"].shift(13).abs()
data_1["retplus1"] = data_1["ret"] + 1
# keep stocks whose price at the end of the previous month was at least $5
data_2 = duckdb.sql("""
    SELECT *
    FROM data_1
    WHERE prc_lag1 >= 5
""").fetchdf()
data_2.sort_values(by=["permno", "date"], inplace=True)
data_2.reset_index(drop=True, inplace=True)

Because the data set is large, the TNIC momentum loop below takes a long time to run. The procedure is also fairly involved, and I have not yet found a way to do the same thing in SQL; I will keep trying to implement it with SQL statements.
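A possible alternative to the per-firm loop is a single merge followed by a grouped weighted average. The sketch below makes several assumptions:

- `tnic_momentum_vectorized` is a hypothetical helper, not the author's code.
- `year` must have the same dtype in both tables. In this notebook data_2["year"] is a string, while `find_momentum` compares data_TNIC["year"] with an integer, so one of them would need converting before the merge.
- The weights are normalised over the peers that actually have momentum in that month. `find_momentum` instead divides by the sum of scores over all of a firm's peers in the year, so results can differ when some peers have missing data.

```python
import pandas as pd


def tnic_momentum_vectorized(links, monthly):
    """Similarity-weighted peer momentum for every (permno, year, month) (hypothetical helper).

    links:   permno, permno2, year, similarity_score (one row per peer link per year)
    monthly: permno, year, month, momentum (one row per firm-month)
    """
    # Rename so each firm-month row can be matched as somebody's peer
    peer = monthly.rename(columns={"permno": "permno2"})
    df = (
        links.merge(peer, on=["permno2", "year"], how="inner")  # a link only applies within its year
        .dropna(subset=["momentum"])
        .assign(w_mom=lambda d: d["similarity_score"] * d["momentum"])
    )
    out = df.groupby(["permno", "year", "month"], as_index=False).agg(
        w_mom=("w_mom", "sum"), w=("similarity_score", "sum")
    )
    # Normalise by the scores of the peers that have momentum in that month
    out["TNIC_momentum"] = out["w_mom"] / out["w"]
    return out[["permno", "year", "month", "TNIC_momentum"]]


# Toy example: firm 1 has peers 2 (score 1) and 3 (score 3) in 1997
links = pd.DataFrame({"permno": [1, 1], "permno2": [2, 3], "year": [1997, 1997],
                      "similarity_score": [1.0, 3.0]})
monthly = pd.DataFrame({"permno": [2, 3], "year": [1997, 1997], "month": ["01", "01"],
                        "momentum": [0.04, 0.08]})
result = tnic_momentum_vectorized(links, monthly)
# expected TNIC_momentum: (0.04 * 1 + 0.08 * 3) / 4 = 0.07
```

In the notebook, `monthly` would come from data_2 and `links` from data_TNIC. The result could then be merged back onto data_2 on permno, year and month.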

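# It takes almost 15 minutes to run this part.
final = []
for year in range(1997, 2013):
    result = find_momentum(year)
    final.append(result)
    print(f"{year} Done")
# ignore_index gives a unique index, which the later index-aligned bin assignment relies on
df_1997_2012 = pd.concat(final, ignore_index=True)
df_1997_2012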

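# This is what we get for TNIC momentum.

The following processing builds the traditional SIC momentum, which serves as a benchmark for comparing the two approaches against the new TNIC momentum.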

# 4-digit SIC code as a string (zero-padded so that codes below 1000 are not truncated)
data_2["SIC_similarity"] = data_2["hsiccd"].astype(int).astype(str).str.zfill(4)
data_3 = data_2.copy()
data_3.drop_duplicates(inplace=True)
data_3.dropna(inplace=True)
firms = data_3["permno"].drop_duplicates().tolist()
data_3["year-month"] = data_3["year"] + data_3["month"]

# wide table: one row per firm, one column per year-month, values = momentum
result = []
groups = data_3.groupby("permno")
for firm in firms:
    df = groups.get_group(firm)
    df = df[["momentum", "year-month"]].set_index("year-month").T
    result.append(df)
df_final = pd.concat(result)
df_final.reset_index(drop=True, inplace=True)
df_firms = pd.DataFrame(firms)
df_final = pd.merge(df_final, df_firms, left_index=True, right_index=True)
df_final.rename(columns={0: "permno"}, inplace=True)

# SIC code(s) of each firm; a permno whose SIC code changed appears once per code
df_firms = pd.DataFrame(firms)
df_firms.rename(columns={0: "permno"}, inplace=True)
df_firms = df_firms.merge(data_3[["permno", "SIC_similarity"]], how="left", on="permno")
df_firms.drop_duplicates(inplace=True)
df_firms.reset_index(drop=True, inplace=True)

# SIC momentum: equal-weighted mean momentum of the other firms with the same SIC code
result = []
sic_groups = df_firms.groupby("SIC_similarity")
for firm, SIC in df_firms[["permno", "SIC_similarity"]].itertuples(index=False):
    a = sic_groups.get_group(SIC)
    a = a[a["permno"] != firm]          # exclude the firm itself
    a = a.merge(df_final, on="permno")  # columns: permno, SIC_similarity, then one per year-month
    a = pd.DataFrame(a.iloc[:, 2:].mean())
    a.reset_index(inplace=True)
    a["permno"] = firm
    a.columns = ["year-month", "result", "permno"]
    result.append(a)
result = pd.concat(result)
data_final = pd.merge(data_3, result, how="left", on=["permno", "year-month"])

Computing the new TNIC momentum by similarity weighting

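This function sorts all firms in the same month by their momentum signal in ascending order and splits them into 10 groups. The highest-momentum 10% get bin number 10 and the lowest 10% get bin number 1, which tells us which stocks to buy and which to short.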

def apply_quantiles(x, include_in_quantiles=None, bins=10):
    # if the argument is specified, only some observations are used to compute the breakpoints
    if include_in_quantiles is None:
        include_in_quantiles = [True] * len(x)
    # calculate quantiles (breakpoints)
    x = pd.Series(x)
    quantiles = np.quantile(
        x[x.notnull() & include_in_quantiles],
        np.linspace(0, 1, bins + 1)
    )
    # widen the outer breakpoints so the minimum and maximum fall inside the first and last bins
    quantiles[0] = x.min() - 1
    quantiles[-1] = x.max() + 1
    # assign each observation to a bin numbered 1..bins
    return pd.cut(x, quantiles, labels=False) + 1

df_1997_2012.rename(columns={"result": "TNIC_momentum"}, inplace=True)
data_final.rename(columns={"result": "SIC_momentum"}, inplace=True)
df_1997_2012["bin_TNIC"] = (
    df_1997_2012
    .groupby("date")
    .apply(lambda group: apply_quantiles(group["TNIC_momentum"], bins=10))
).reset_index(level=[0], drop=True).sort_index()
data_final["bin_SIC"] = (
    data_final
    .groupby("date")
    .apply(lambda group: apply_quantiles(group["SIC_momentum"], bins=10))
).reset_index(level=[0], drop=True).sort_index()
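`apply_quantiles` computes the breakpoints by hand. A shorter sketch of the same per-date decile sort uses pandas' `pd.qcut` inside `groupby(...).transform`, as shown below. It makes three assumptions:

- `assign_deciles` is a hypothetical helper.
- Ranking before binning is a choice made here, not in the original. It avoids duplicate-edge errors when many stocks share a signal value.
- Unlike `apply_quantiles`, it has no `include_in_quantiles` option.

```python
import numpy as np
import pandas as pd


def assign_deciles(df, signal, date_col="date", bins=10):
    """Bin `signal` into 1..bins within each date (1 = lowest, bins = highest); hypothetical helper."""
    return df.groupby(date_col)[signal].transform(
        # rank first so tied signal values cannot produce duplicate bin edges
        lambda s: pd.qcut(s.rank(method="first"), bins, labels=False) + 1
    )


# Toy data: 20 stocks on each of two dates
toy = pd.DataFrame({
    "date": ["1997-01-31"] * 20 + ["1997-02-28"] * 20,
    "TNIC_momentum": np.r_[np.arange(20), np.arange(20)[::-1]] / 100,
})
toy["bin_TNIC"] = assign_deciles(toy, "TNIC_momentum")
```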

# TNIC portfolios are built from df_1997_2012
portfolios_TNIC = (
    df_1997_2012
    .groupby(["date", "bin_TNIC"])
    .apply(
        lambda g: pd.Series({
            "portfolio_TNIC_ew": g["ret"].mean(),
            "portfolio_TNIC_vw": (g["ret"] * g["mcap_lag1"]).sum() / g["mcap_lag1"].sum()
        })
    )
).reset_index()
# SIC portfolios are built from data_final
portfolios_SIC = (
    data_final
    .groupby(["date", "bin_SIC"])
    .apply(
        lambda g: pd.Series({
            "portfolio_SIC_ew": g["ret"].mean(),
            "portfolio_SIC_vw": (g["ret"] * g["mcap_lag1"]).sum() / g["mcap_lag1"].sum()
        })
    )
).reset_index()
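The `groupby(...).apply(lambda g: pd.Series(...))` pattern above calls a Python function once per (date, bin) group. A sketch of the same equal- and value-weighted averages using named aggregation is below. It should give the same numbers, because both versions skip missing products in the numerator and sum all weights in the denominator. `portfolio_returns` is a hypothetical helper, and the toy values are made up.

```python
import pandas as pd


def portfolio_returns(df, bin_col, ret_col="ret", weight_col="mcap_lag1"):
    """Equal- and value-weighted return of each (date, bin) portfolio (hypothetical helper)."""
    out = (
        df.assign(_wret=df[ret_col] * df[weight_col])
        .groupby(["date", bin_col], as_index=False)
        .agg(ew=(ret_col, "mean"), _wret=("_wret", "sum"), _w=(weight_col, "sum"))
    )
    # weights are last month's market caps, so they are known when the portfolio is formed
    out["vw"] = out["_wret"] / out["_w"]
    return out.drop(columns=["_wret", "_w"])


toy = pd.DataFrame({
    "date": ["1997-01-31"] * 2,
    "bin_TNIC": [10, 10],
    "ret": [0.10, -0.10],
    "mcap_lag1": [300.0, 100.0],
})
res = portfolio_returns(toy, "bin_TNIC")  # ew = 0.0, vw = (30 - 10) / 400 = 0.05
```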

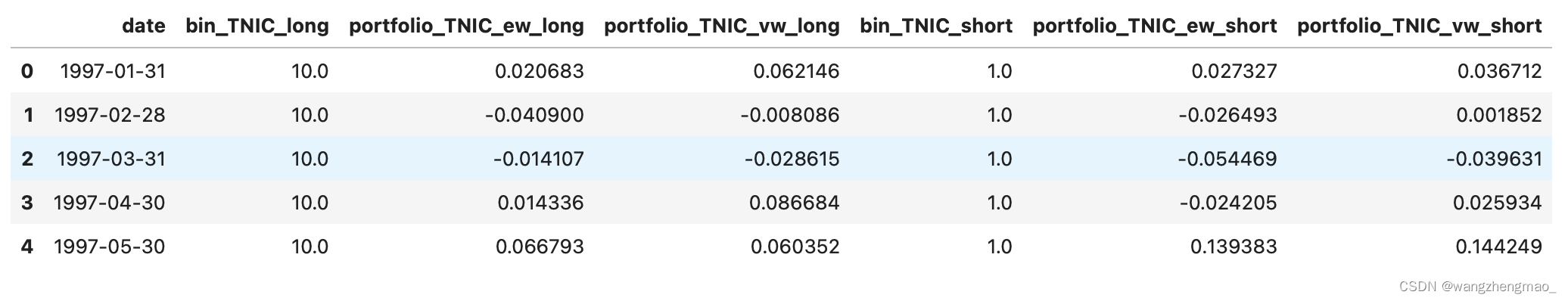

# long leg: highest-momentum decile (10); short leg: lowest-momentum decile (1)
portfolios2_TNIC = pd.merge(
    portfolios_TNIC.query("bin_TNIC == 10"),
    portfolios_TNIC.query("bin_TNIC == 1"),
    suffixes=["_long", "_short"],
    on="date"
)
portfolios2_SIC = pd.merge(
    portfolios_SIC.query("bin_SIC == 10"),
    portfolios_SIC.query("bin_SIC == 1"),
    suffixes=["_long", "_short"],
    on="date"
)

"We test the hypothesis that low visibility shocks to text-based network industry peers can explain industry momentum. We consider industry peer firms identified through 10-K product text and focus on economic peer links that do not share common SIC codes. Shocks to less visible peers generate economically large momentum profits, and are stronger than own-firm momentum variables. More visible traditional SIC-based peers generate only small, short-lived momentum profits. Our findings are consistent with momentum profits arising partially from inattention to economic links of less visible industry peers."

At this point, the data preprocessing is essentially complete.

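This article uses Python to replicate the quantitative strategy in the paper by Professors Gerard Hoberg and Gordon Phillips. The traditional approach uses SIC codes to classify industries and find a firm's peers, and takes the equal-weighted average momentum of those peers as the SIC momentum. In practice, however, the authors found that portfolios built this way earn small, short-lived returns. Because SIC peers are very similar, when one firm's stock price moves, its peers react within a short time. Momentum, as a classic trend-following strategy, struggles to track such short-lived moves. Even when it does, the time needed to form the portfolio makes it hard to capture the full return.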

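This article replicates the Text-Based Industry Momentum strategy in Python, and the final results agree with the paper's conclusion: the strategy built on TNIC momentum outperforms the portfolio built on traditional SIC momentum. Because some data are unavailable, the paper's high-disparity strategy is not replicated. Also, because the data set is large, the Python code takes a long time to run; I am currently trying to move the data processing to SQL to speed it up.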

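We now have the monthly returns of the long and short legs of each strategy. Next, we plot the compounded (cumulative) returns to see which strategy performs better.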
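The cumulative returns follow the usual compounding rule, 1 + R_T = (1 + r_1)(1 + r_2)...(1 + r_T), where r_t is the monthly long-minus-short return. Plotting 1 + R_T with a log y-axis is equivalent to plotting the running sum of log(1 + r_t). On that scale, equal percentage gains look the same size at any point in the sample. The monthly spreads below are made-up numbers.

```python
import numpy as np
import pandas as pd

spread = pd.Series([0.10, -0.05, 0.02])  # hypothetical monthly long-minus-short returns
cum = (1 + spread).cumprod() - 1          # same rule as the cum_*_ew / cum_*_vw columns
log_growth = np.log1p(spread).cumsum()    # what a log-scale plot of 1 + cum effectively shows
print(cum.round(4).tolist())              # [0.1, 0.045, 0.0659]
```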

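Since the hsiccd field in crsp.msf is the SIC code, we first build a table of each firm's SIC peers and then compute SIC momentum as the equal-weighted average of the peers' momentum. This part of the code also takes a long time to run.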
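The SIC loop looks up each firm's SIC group and averages the other members' momentum. The same leave-one-out mean can be written without a loop: the peers' mean is (group sum minus own value) divided by (non-missing count minus one). The sketch below makes two assumptions:

- The SIC code is taken per firm-month row, whereas the loop uses each firm's code(s) from df_firms.
- `sic_peer_momentum` is a hypothetical helper, and the toy data are made up.

```python
import pandas as pd


def sic_peer_momentum(df, sic_col="SIC_similarity", time_col="year-month", mom_col="momentum"):
    """Equal-weighted mean momentum of the other firms with the same SIC code and month."""
    g = df.groupby([sic_col, time_col])[mom_col]
    total = g.transform("sum")                    # NaN momenta are skipped
    count = g.transform("count")                  # number of non-missing momenta in the group
    own = df[mom_col].fillna(0)
    peers_n = count - df[mom_col].notna().astype(int)
    # firms with no peer in their SIC code that month get NaN
    return (total - own) / peers_n.where(peers_n > 0)


toy = pd.DataFrame({
    "SIC_similarity": ["3571"] * 3 + ["2834"],
    "year-month": ["199701"] * 4,
    "momentum": [0.10, 0.20, 0.60, 0.05],
})
toy["SIC_momentum"] = sic_peer_momentum(toy)  # 0.40, 0.35, 0.15, NaN
```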

Traditional SIC momentum from SIC peers

Cumulative strategy returns

# long-minus-short return of the TNIC strategy, value- and equal-weighted
portfolios2_TNIC["strategy_TNIC_vw"] = portfolios2_TNIC["portfolio_TNIC_vw_long"] - portfolios2_TNIC["portfolio_TNIC_vw_short"]
portfolios2_TNIC["strategy_TNIC_ew"] = portfolios2_TNIC["portfolio_TNIC_ew_long"] - portfolios2_TNIC["portfolio_TNIC_ew_short"]
portfolios2_TNIC["cum_TNIC_vw"] = (portfolios2_TNIC["strategy_TNIC_vw"] + 1).cumprod() - 1  # cumulative return
portfolios2_TNIC["cum_TNIC_ew"] = (portfolios2_TNIC["strategy_TNIC_ew"] + 1).cumprod() - 1  # cumulative return
# plot the growth of $1 (1 + cumulative return) on a log scale
(
    portfolios2_TNIC
    .assign(date=pd.to_datetime(portfolios2_TNIC["date"]))
    .assign(cum_TNIC_vw=portfolios2_TNIC["cum_TNIC_vw"] + 1)
    .assign(cum_TNIC_ew=portfolios2_TNIC["cum_TNIC_ew"] + 1)
    .plot(x="date", y=["cum_TNIC_ew", "cum_TNIC_vw"], logy=True).grid(axis="y")
)
# same for the SIC strategy
portfolios2_SIC["strategy_SIC_vw"] = portfolios2_SIC["portfolio_SIC_vw_long"] - portfolios2_SIC["portfolio_SIC_vw_short"]
portfolios2_SIC["strategy_SIC_ew"] = portfolios2_SIC["portfolio_SIC_ew_long"] - portfolios2_SIC["portfolio_SIC_ew_short"]
portfolios2_SIC["cum_SIC_vw"] = (portfolios2_SIC["strategy_SIC_vw"] + 1).cumprod() - 1  # cumulative return
portfolios2_SIC["cum_SIC_ew"] = (portfolios2_SIC["strategy_SIC_ew"] + 1).cumprod() - 1  # cumulative return
(
    portfolios2_SIC
    .assign(date=pd.to_datetime(portfolios2_SIC["date"]))
    .assign(cum_SIC_vw=portfolios2_SIC["cum_SIC_vw"] + 1)
    .assign(cum_SIC_ew=portfolios2_SIC["cum_SIC_ew"] + 1)
    .plot(x="date", y=["cum_SIC_ew", "cum_SIC_vw"], logy=True).grid(axis="y")
)

The function below computes the new similarity-weighted TNIC momentum.

# This function computes TNIC momentum for every permno in a given year.
def find_momentum(year):
    # CRSP firm-months of this year (data_2["year"] is a string) and the TNIC links of this year
    df_file = data_2[data_2["year"] == str(year)].copy()
    df_TNIC = data_TNIC[data_TNIC["year"] == year]

    # momentum of every peer (permno2) by month: one row per peer, one column per month "01".."12"
    peers = df_TNIC["permno2"].drop_duplicates().tolist()
    result = []
    for i in peers:
        a = df_file[df_file["permno"] == i][["momentum", "month"]]
        a = a.set_index("month")
        result.append(a)
    result = pd.concat(result, axis=1)
    df_final = pd.concat([result.T.reset_index(drop=True), pd.DataFrame(peers)], axis=1)
    df_final.rename(columns={0: "permno2"}, inplace=True)

    # attach each peer's monthly momentum to every (permno, permno2) link of the year
    df_year = pd.merge(df_TNIC, df_final, how="left", on="permno2")
    groups = df_year.groupby("permno")

    final = []
    for i in df_year["permno"].drop_duplicates().tolist():
        a = groups.get_group(i)
        # similarity weights of the firm's peers, normalised to sum to 1
        w = a["similarity_score"] / a["similarity_score"].sum()
        # similarity-weighted peer momentum for each month; missing momenta are skipped in the sum
        result = pd.DataFrame([(w * a[f"{m:02d}"]).sum() for m in range(1, 13)])
        result["permno"] = i
        final.append(result)
    final = pd.concat(final)

    final_1 = final.reset_index()
    final_1.columns = ["month", "result", "permno"]
    final_1["index"] = final_1["month"] + 1  # row position 0..11 -> calendar month 1..12
    df_file["index"] = df_file["month"].astype(int)
    df_final = pd.merge(df_file, final_1[["permno", "index", "result"]], on=["permno", "index"], how="left")
    return df_final

Because the data are large, SQL (via DuckDB) is used to load them and apply simple filters, which shortens the processing time.

data_crsp = duckdb.read_parquet(r"C:crsp.msf.parquet")
data_hoberg = duckdb.read_parquet(r"C:hoberg_cross_firm_similarity_permno.parquet")
# In this notebook, data_hoberg holds the TNIC industry similarity scores.
data_1 = duckdb.sql("""
    SELECT permno, hexcd, hsiccd, date, prc, ret, shrout
    FROM data_crsp
    WHERE date BETWEEN '1995-01-01' AND '2012-12-31'
      AND hexcd IN (1, 2, 3)  -- NYSE, AMEX, NASDAQ
""").fetchdf()
# The paper uses data from 1997 to 2012. Loading starts on 1995-01-01 so that
# momentum and the lagged variables are already available from January 1997.
data_TNIC = duckdb.sql("""
    SELECT *
    FROM data_hoberg
    WHERE year BETWEEN 1997 AND 2012
""").fetchdf()
data_1.dropna(inplace=True)
data_1.sort_values(by=["permno", "date"], inplace=True)
data_1.reset_index(drop=True, inplace=True)
data_TNIC.dropna(inplace=True)
data_TNIC.sort_values(by=["permno1", "year"], inplace=True)
data_TNIC.reset_index(drop=True, inplace=True)
data_TNIC.rename(columns={"permno1": "permno"}, inplace=True)
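A quick check of why loading starts in 1995: momentum for January 1997 needs returns back to month t-12, and prc_lag13 needs the price at month t-13. The monthly period arithmetic below gives those months. It assumes a firm has no gaps in its monthly records, because `shift()` lags by rows rather than by calendar months.

```python
import pandas as pd

first_month = pd.Period("1997-01", freq="M")  # first month of the sample
momentum_start = first_month - 12             # oldest return in the t-12 .. t-2 momentum window
lag13_month = first_month - 13                # month whose price feeds prc_lag13
print(momentum_start, lag13_month)            # 1996-01 1995-12
```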

The above is a simple, logic-level analysis; next we verify it with data. The data required are crsp.msf.parquet and hoberg_cross_firm_similarity_permno.parquet.

Importing the required libraries

import duckdb
import pandas as pd
import numpy as np
import warnings
warnings.filterwarnings("ignore")
from IPython.core.interactiveshell import InteractiveShell
import matplotlib.pyplot as plt
import statsmodels.api as sm
import statsmodels.formula.api as smf

Data preprocessing

A function to compute traditional momentum

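def rolling_prod(a, n=11):
    # rolling product of the last n gross returns: cumprod(t) / cumprod(t - n)
    ret = np.cumprod(a.values)
    ret[n:] = ret[n:] / ret[:-n]
    ret[:n-1] = np.nan  # fewer than n observations available
    return pd.Series(ret, index=a.index)

data_2["rollret_11"] = (
    data_2
    .assign(retplus1=data_2["ret"].fillna(0) + 1)  # gross return, missing returns treated as 0
    .groupby("permno")["retplus1"]
    .transform(rolling_prod)  # keeps data_2's index so the result aligns row by row
) - 1
data_2["rollret_11_lag1"] = data_2.groupby("permno")["rollret_11"].shift(1)
# momentum at month t = cumulative return over months t-12 .. t-2 (the most recent month is skipped)
data_2["momentum"] = data_2.groupby("permno")["rollret_11"].shift(2)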

data_2 = duckdb.sql("""
    SELECT *
    FROM data_2
    WHERE date BETWEEN '1997-01-01' AND '2012-12-31'
""").fetchdf()
data_2["year"] = data_2["date"].apply(lambda x: str(x)[:4])
data_2["month"] = data_2["date"].apply(lambda x: str(x)[5:7])
data_2["momentum"] = data_2["momentum"].fillna(0)  # fill missing momentum with 0
data_2.dropna(inplace=True)
data_2.reset_index(drop=True, inplace=True)
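`rolling_prod` builds an 11-month product from cumulative products, and `shift(2)` moves it so that the signal at month t covers months t-12 to t-2. The sketch below computes the same signal with pandas' rolling window and cross-checks it against a copy of `rolling_prod` on a made-up return series. `momentum_signal` is a hypothetical helper.

```python
import numpy as np
import pandas as pd


def rolling_prod(a, n=11):
    # copy of the notebook's helper: product of the last n values via cumulative products
    ret = np.cumprod(a.values)
    ret[n:] = ret[n:] / ret[:-n]
    ret[:n - 1] = np.nan
    return pd.Series(ret, index=a.index)


def momentum_signal(df, window=11, skip=2):
    """Cumulative return over months t-12 .. t-2, computed with a rolling window (hypothetical helper)."""
    gross = 1 + df["ret"].fillna(0)
    roll = gross.groupby(df["permno"]).transform(
        lambda s: s.rolling(window).apply(np.prod, raw=True)
    ) - 1
    return roll.groupby(df["permno"]).shift(skip)


rets = [0.02, -0.01, 0.03, 0.0, 0.01, -0.02, 0.04, 0.01, 0.0, -0.03, 0.02, 0.01, 0.05, -0.01]
toy = pd.DataFrame({"permno": [10001] * len(rets), "ret": rets})
toy["momentum"] = momentum_signal(toy)

# cross-check against the cumulative-product version used in the notebook
gross = 1 + toy["ret"]
alt = (gross.groupby(toy["permno"]).transform(rolling_prod) - 1).groupby(toy["permno"]).shift(2)
print(np.allclose(toy["momentum"], alt, equal_nan=True))  # True
```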

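This article reflects the author's independent views and does not represent the views of 股票交易接口 (Stock Trading Interface).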